Welcome back to CS201, the course where your code keeps getting closer to the machinery, and the machinery keeps revealing that it has been multitasking, negotiating, interrupting, rescheduling, and quietly judging everyone this entire time.

So far, we have watched programs go from source code to running behavior in CS201 Episode 1 — How a Computer Actually Runs a Program. We learned how values become bits in CS201 Episode 2 — Bits, Bytes, and Data Representation. We went lower into logic gates in CS201 Episode 3 — Logic, Gates, and Digital Circuits, then climbed back up into instructions, registers, and memory in CS201 Episode 4 — Assembly Language and the Machine Model. Last time, in CS201 Episode 5 — CPU Architecture and Performance Basics, we looked at why processors are fast, weird, and occasionally suspiciously clever.

Now we arrive at the part where the machine stops doing one thing at a time.

Or, more accurately, where it creates the illusion that many things are happening at once, while several layers of hardware and software take turns rapidly enough that everyone agrees not to scream.

This episode is about processes, threads, and concurrency.

And yes, this is the chapter where many perfectly reasonable programs begin acting like raccoons found the electrical panel.

The basic problem: one computer, many jobs

A modern computer is almost never doing one thing.

Even when you are “just” typing in a document, the machine may also be:

- checking the network

- refreshing the display

- playing audio

- syncing files

- running background services

- managing memory

- scanning for updates

- waiting for input

- handling notifications

- pretending it is not bothered by your 47 browser tabs

The computer feels like one smooth machine because the operating system coordinates all of this.

That coordination is one of the great practical illusions of computing. Not a dishonest illusion, exactly. More like stage magic performed by an overworked stage manager with a stopwatch.

In CS101 Episode 3 — Loops and Functions, we learned that a program can repeat work without copying the same line forever like a haunted intern. In CS102 Episode 13 — State, Bugs, and Program Behavior, we saw that state can make programs behave differently over time.

Concurrency combines both ideas and adds a new ingredient:

More than one flow of work can be in progress at the same time.

This is powerful.

This is necessary.

This is also how your program can pass every test on Tuesday and explode on Wednesday because two operations arrived in the opposite order and decided to redecorate memory with a hammer.

Concurrency is not always parallelism

Before we go further, we need to clean up two words that often get tossed into the same drawer.

Concurrency means multiple tasks are in progress during the same overall period.

Parallelism means multiple tasks are literally executing at the same instant.

Those are related, but not identical.

A single-core CPU can be concurrent. It can switch rapidly between tasks:

Task A runs briefly.

Task B runs briefly.

Task C runs briefly.

Task A resumes.

Nobody gets the whole kitchen forever. Everyone gets a turn at the stove.

A multi-core CPU can be parallel. Core 1 runs Task A while Core 2 runs Task B at the same time.

That is real simultaneous execution.

The distinction matters because concurrency is about structure. Parallelism is about execution.

You can write concurrent software that does not run in parallel. You can also write software that runs in parallel and still performs badly because everyone is waiting for the same lock, disk, network, database, or tiny shared variable that has somehow become the mayor.

What is a process?

A process is a running instance of a program.

Not the program file sitting on disk.

Not the source code.

Not the app icon looking innocent.

A process is the program after the operating system has loaded it into memory, given it resources, and said, “Fine, you may exist.”

A process typically has:

- its own memory space

- its own execution state

- open files or network connections

- security permissions

- one or more threads

- a process ID

- a relationship with the operating system scheduler

The important beginner-friendly idea is this:

A process is a protected container for a running program.

Your browser is a process. Your code editor is a process. Your terminal is a process. Your local development server is a process. Some applications use many processes because apparently one sandbox was not enough emotional support.

The operating system tries to keep processes isolated from each other. If one program crashes, the whole machine should not collapse like a folding chair at a county fair.

That isolation is one of the operating system’s major jobs.

It protects memory. It controls permissions. It manages files. It decides which process runs next. It prevents one process from casually wandering into another process’s private memory and rearranging the furniture.

This connects directly to CS102 Episode 9 — Memory and the Machine. Memory is not just “a place where values live.” In real systems, memory is also guarded territory.

A process gets its own address space. That means the addresses it uses are not simple physical addresses nailed directly to the hardware like tiny brass nameplates. They are usually virtual addresses, translated and managed by the operating system and hardware working together.

The result is that Process A can believe it owns address 0x1000, and Process B can also believe it owns address 0x1000, and both can be correct because the operating system is playing a very serious game of “you each get your own pretend universe.”

Thank goodness.
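To see the "separate universe" idea in action, here is a minimal sketch that starts a second Python process and compares notes. The variable name counter and the child's one-line program are made up for illustration; the only real machinery is the standard subprocess module.

```python
import os
import subprocess
import sys

counter = 10  # lives in the parent process's memory

# The child is a separate process with its own address space.
# It creates its own `counter`; it cannot see or change the parent's.
CHILD_CODE = "import os; counter = 99; print(os.getpid(), counter)"

def run_child():
    """Run CHILD_CODE in a new Python process; return (child_pid, child_counter)."""
    result = subprocess.run(
        [sys.executable, "-c", CHILD_CODE],
        capture_output=True,
        text=True,
        check=True,
    )
    pid_text, counter_text = result.stdout.split()
    return int(pid_text), int(counter_text)

child_pid, child_counter = run_child()
print("parent pid:", os.getpid(), "counter:", counter)
print("child pid:", child_pid, "counter:", child_counter)
```

The two process IDs differ, and the parent's counter is still 10 after the child has set its own to 99. Each process only ever saw its own copy.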

What is a thread?

A thread is a sequence of execution inside a process.

If a process is the container, a thread is one path of activity inside that container.

A process can have one thread.

Many beginner programs do.

The program starts. It runs line by line. It does things. It exits. Very civilized. Possibly wearing a cardigan.

But a process can also have multiple threads.

Those threads share the process’s memory, files, and resources, while each thread has its own execution state: its own stack, instruction pointer, and registers at a given moment.

That shared memory is the blessing and the curse.

It is a blessing because threads can communicate quickly. They can work together on shared data without going through expensive process-to-process communication every time they need to coordinate.

It is a curse because threads can also step on each other’s toes, change data out from under each other, and create bugs that appear only when the timing is exactly wrong, the moon is emotionally available, and your demo starts in four minutes.

That is not a joke.

Well, it is a joke.

But it is also your future.
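Here is a small sketch of "shared memory, separate stacks" using Python's threading module. The names shared_log and worker are invented for this example. Each thread keeps its running total in a local variable, and both threads write their result into the same list.

```python
import threading

shared_log = []  # one list, visible to every thread in this process

def worker(name, count):
    # `total` is a local variable: it lives on this thread's own stack.
    total = 0
    for i in range(count):
        total += i
    # Both threads append to the same shared list object.
    # (A single append is safe in CPython; compound updates are not.)
    shared_log.append((name, total))

threads = [
    threading.Thread(target=worker, args=("A", 4)),
    threading.Thread(target=worker, args=("B", 5)),
]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(sorted(shared_log))  # [('A', 6), ('B', 10)]
```

The order in which the two entries land in the list is up to the scheduler, which is why the example sorts before printing.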

A tiny example: the shared counter problem

Suppose two threads both update the same counter.

You might imagine this operation is simple:

counter = counter + 1

Looks innocent.

A tiny little line. What could go wrong?

Plenty.

That line is not necessarily one machine-level action. It often breaks down into smaller steps:

- Read the current value of counter.

- Add 1.

- Store the new value back.

Now imagine two threads doing that at the same time.

The counter starts at 10.

Thread A reads 10.

Thread B reads 10.

Thread A adds 1 and gets 11.

Thread B adds 1 and gets 11.

Thread A stores 11.

Thread B stores 11.

Two increments happened.

The final value is 11.

Congratulations, one increment has vanished into the mist like a sock in a dryer with root access.

The correct final value should have been 12.

This is called a race condition.

A race condition happens when the correctness of a program depends on the timing or ordering of events that are not reliably controlled.

That phrase should make your developer instincts sit up straight and stop chewing on the furniture.
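The unlucky interleaving above can be replayed by hand, with no real threads involved. This is only a simulation: a_local and b_local are made-up names standing in for each thread's private copy of the value it read.

```python
counter = 10

a_local = counter        # Thread A reads 10
b_local = counter        # Thread B reads 10
a_local = a_local + 1    # Thread A computes 11
b_local = b_local + 1    # Thread B computes 11
counter = a_local        # Thread A stores 11
counter = b_local        # Thread B stores 11, overwriting A's update

print(counter)  # 11, not 12
```

With real threads you would not get to choose this ordering, which is exactly the problem.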

Why race conditions are so frustrating

Race conditions are frustrating because the code can look correct locally.

It can pass tests.

It can work 999 times.

Then on the 1000th run, two threads line up in just the wrong way and suddenly your account balance, shopping cart, sensor reading, game state, analytics count, or medical device telemetry decides to freestyle.

The problem is not that the computer is random.

The problem is that your code cannot assume any particular scheduling order unless it explicitly enforces one.

The operating system scheduler decides when threads run, pause, resume, or wait. That decision can depend on CPU load, timers, interrupts, I/O, priority, system calls, available cores, and the general social weather inside the machine.

Your code may say:

deposit()

withdraw()

But in a concurrent system, the actual interleaving of lower-level operations might be more like:

Thread A reads balance

Thread B reads balance

Thread A computes new balance

Thread B computes new balance

Thread A writes balance

Thread B writes balance

The human saw two clean function calls.

The machine saw a tiny parade of operations, and several of them wandered into traffic.

Scheduling: the operating system’s turn-taking system

A scheduler decides which thread or process gets CPU time.

This matters because CPUs are fast, but they are not infinite. Even with multiple cores, there are usually more runnable tasks than available execution slots.

The scheduler gives each task a chance to run.

Sometimes a task runs until it blocks, meaning it cannot continue until something happens, like:

- waiting for disk input

- waiting for a network response

- waiting for a lock

- waiting for user input

- waiting for a timer

- waiting for a database query

- waiting for some other service to stop being dramatic

Sometimes a task is preempted, meaning the operating system pauses it so another task can run.

Preemption is one reason your computer remains usable while different programs compete for attention. Without it, one badly behaved program could hog the CPU like a karaoke singer who thinks every song needs a second verse.
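To make preemption less abstract, here is a toy simulation of one simple policy, round-robin with a fixed time slice. It is a sketch, not how any particular operating system works; the function name round_robin, the task names, and the work units are all invented for illustration.

```python
from collections import deque

def round_robin(tasks, quantum):
    """Simulate round-robin scheduling.

    tasks: dict mapping task name -> units of CPU work it still needs.
    quantum: maximum units a task may run before it is preempted.
    Returns the timeline as a list of (task_name, units_run) slices.
    """
    ready = deque(tasks.items())  # the ready queue, in arrival order
    timeline = []
    while ready:
        name, remaining = ready.popleft()
        ran = min(quantum, remaining)
        timeline.append((name, ran))
        remaining -= ran
        if remaining > 0:
            # Preempted: go to the back of the ready queue.
            ready.append((name, remaining))
    return timeline

timeline = round_robin({"A": 5, "B": 2, "C": 3}, quantum=2)
print(timeline)
# [('A', 2), ('B', 2), ('C', 2), ('A', 2), ('C', 1), ('A', 1)]
```

Task A needs the most work, but it never gets to run five units in a row. It is paused after each slice so B and C get their turn.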

Scheduling involves tradeoffs.

A scheduler may care about responsiveness, throughput, fairness, priority, latency, power usage, real-time constraints, or keeping the user interface from freezing in the most visible and embarrassing possible way.

There is no one perfect scheduling policy for every situation.

A desktop operating system wants responsiveness.

A server may care about throughput.

A real-time system may care about deadlines.

A phone may care about battery life.

A cloud workload may care about cost, isolation, and predictable resource sharing.

The machine is always making choices. Systems programming is learning which choices are being made underneath you.

Blocking and waiting: why programs pause

A thread can be running, ready, or blocked.

Running means it is currently executing.

Ready means it could execute if the scheduler gives it CPU time.

Blocked means it is waiting for something else.

This distinction is central to real software.

Imagine a web server.

If it handled one request at a time, then a slow database query from one user could make everyone else wait. That would be bad. Users do not appreciate being told, “Please remain calm while another person’s shopping cart considers its life choices.”

Instead, servers often handle many requests concurrently.

One request waits for a database.

Another request reads from the network.

Another prepares a response.

Another logs data.

Another checks authentication.

Concurrency allows useful progress while some tasks are waiting.

This is why concurrency matters even when you do not have lots of CPU-heavy work.

Many programs spend a lot of time waiting on I/O.

Disk, network, user input, databases, APIs, cloud services, and file systems are all slower than CPU instructions. Vastly slower. The CPU can do a ridiculous amount of work while your program waits for a network packet to wander back from somewhere with opinions.

Concurrency lets the program avoid wasting that waiting time.
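A rough sketch of that effect, with time.sleep standing in for a slow disk, network, or database call. The function names and the 0.2 second delay are assumptions chosen for the demo. Five "requests" each wait 0.2 seconds, but because the waits overlap, the whole batch takes roughly as long as one wait rather than five.

```python
import threading
import time

def fake_io_request(request_id, results):
    # time.sleep stands in for waiting on a disk, network, or database.
    time.sleep(0.2)
    results[request_id] = "done"

def run_concurrently(n):
    """Start n fake requests at once; return (results, elapsed_seconds)."""
    results = {}
    threads = [
        threading.Thread(target=fake_io_request, args=(i, results))
        for i in range(n)
    ]
    start = time.perf_counter()
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results, time.perf_counter() - start

results, elapsed = run_concurrently(5)
print(f"{len(results)} requests finished in about {elapsed:.2f}s")
```

Run one after another, the same five requests would take about a full second. The exact timing you see will vary with your machine and its mood.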

Processes vs threads: the useful comparison

Processes are heavier but more isolated.

Threads are lighter but more tightly connected.

A process has its own memory space. Creating a process can be more expensive. Communication between processes usually requires operating system help, such as pipes, sockets, shared memory, files, or message queues.

A thread shares memory with other threads in the same process. Creating a thread is usually cheaper than creating a full process, and communication can be faster because shared data is directly accessible.

But shared memory is also where trouble enters wearing tap shoes.

Here is the practical summary:

Use processes when isolation matters.

Use threads when shared work inside one program needs efficient cooperation.

Use neither blindly, because concurrency is not seasoning. You do not sprinkle it on top of a program and automatically make it faster. Sometimes you just create a faster mess.

The classic failure: “I added threads and now it is slower”

This happens constantly.

A beginner sees multiple threads and thinks:

More threads equals more speed.

Reasonable guess.

Often wrong.

Threads have overhead. They must be created, scheduled, paused, resumed, synchronized, and sometimes waited on. If the work is too small, the overhead can outweigh the benefit.

Also, parallel work may fight over shared resources:

- the same memory

- the same lock

- the same file

- the same network connection

- the same database

- the same CPU cache

- the same GPU

- the same external API rate limit

- the same poor little logging system trying its best

This is where CS102 Episode 8 — Complexity and Efficiency quietly re-enters the room with a clipboard.

Performance is not just about doing more things.

It is about understanding where the bottleneck is.

If your task is CPU-bound, meaning it spends most of its time computing, parallelism may help if the work can be divided cleanly across cores.

If your task is I/O-bound, meaning it spends most of its time waiting, concurrency may help by letting other work proceed during those waits.

If your task is bottlenecked on a single shared resource, adding threads may simply create a line of angry little workers all waiting at the same locked door.

Synchronization: making shared access safe

If multiple threads share data, you need rules.

Synchronization is the general idea of coordinating access to shared resources so operations happen safely.

Common tools include:

Locks, also called mutexes, which allow only one thread at a time into a critical section.

A critical section is code that accesses shared state and must not run at the same time as another thread doing conflicting work on that state.

Semaphores, which control access to a limited number of resources.

Condition variables, which let threads wait until some condition becomes true.

Atomic operations, which provide small operations that complete indivisibly from the perspective of other threads.

Message passing, where threads or processes communicate by sending data instead of directly sharing everything.

For the counter example, a lock might make the increment safe:

lock.acquire()

counter = counter + 1

lock.release()

Conceptually, this says:

“Only one thread may touch this counter at a time.”

In real code, you usually want a safer pattern, like Python's with statement, which releases the lock even if something goes wrong:

with lock:
    counter = counter + 1

This is better because it reduces the chance that an exception leaves the lock held forever like a dragon sleeping on your program’s front door.
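Here is the with lock pattern as a complete, runnable sketch using threading.Lock from Python's standard library. The function name add_many, the thread count, and the loop size are arbitrary choices for the demo.

```python
import threading

counter = 0
lock = threading.Lock()

def add_many(times):
    global counter
    for _ in range(times):
        # Only one thread at a time may run the read-add-store sequence.
        with lock:
            counter = counter + 1

threads = [threading.Thread(target=add_many, args=(10_000,)) for _ in range(4)]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(counter)  # 40000
```

Four threads, ten thousand increments each, and none of them vanish into the mist.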

Deadlock: when everyone waits forever

Synchronization solves some problems and introduces others, because computer science enjoys balance.

A deadlock happens when tasks wait on each other in a cycle, and none can proceed.

Imagine this:

Thread A holds Lock 1 and waits for Lock 2.

Thread B holds Lock 2 and waits for Lock 1.

Both are waiting.

Neither can continue.

The program is not technically dead.

It is just staring into the middle distance, holding two clipboards, unable to make a decision.

A deadlock requires four ingredients, all present at the same time:

- mutual exclusion: a resource can be held by only one task at a time

- hold and wait: a task holds one resource while waiting for another

- no preemption: resources cannot be forcibly taken away

- circular wait: tasks form a cycle of dependency

You do not need to memorize that list like a spell yet, but you should understand the vibe:

Deadlocks happen when resource ownership and waiting rules create a trap.

Avoiding deadlock often means using consistent lock ordering, keeping critical sections small, using timeouts, reducing shared state, or choosing designs that avoid locking multiple resources at once.
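As one illustration of consistent lock ordering, here is a sketch with two hypothetical bank accounts. The Account class and the rule "lock the smaller account_id first" are assumptions made for this example. Because every transfer takes the locks in the same order, the Thread A and Thread B cycle described above cannot form.

```python
import threading

class Account:
    def __init__(self, account_id, balance):
        self.account_id = account_id
        self.balance = balance
        self.lock = threading.Lock()

def transfer(source, target, amount):
    # Always lock the account with the smaller id first, no matter
    # which direction the money flows. This rules out circular wait.
    first, second = sorted((source, target), key=lambda acct: acct.account_id)
    with first.lock:
        with second.lock:
            source.balance -= amount
            target.balance += amount

a = Account(1, 100)
b = Account(2, 100)

def many_transfers(source, target):
    for _ in range(1000):
        transfer(source, target, 1)

t1 = threading.Thread(target=many_transfers, args=(a, b))
t2 = threading.Thread(target=many_transfers, args=(b, a))
t1.start()
t2.start()
t1.join()
t2.join()

print(a.balance, b.balance)  # 100 100
```

If transfer instead locked source first and target second, the two threads would grab the locks in opposite orders and could freeze exactly like the clipboard-holders above.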

Starvation and fairness

Deadlock is when nobody in the cycle proceeds.

Starvation is when one task keeps getting denied progress because others repeatedly go first.

Imagine a thread that is technically allowed to run, but higher-priority work keeps arriving. The poor thing remains ready forever, aging in the queue like a support ticket labeled “later.”

Schedulers and synchronization tools often try to balance efficiency with fairness.

But fairness is not free.

A system optimized purely for throughput might let some work wait longer. A system optimized for fairness might reduce raw throughput. A system optimized for responsiveness may favor interactive tasks over background work.

These are tradeoffs.

Real systems are full of them.

Anyone who tells you there is always one obvious answer in systems design is either simplifying for your benefit or selling a conference talk with suspicious confidence.

A real-world example: the web server

Let us make this concrete.

Suppose you build a simple web server.

A user requests a page.

The server must:

- Accept the network connection.

- Parse the request.

- Check authentication.

- Query a database.

- Build a response.

- Send the response.

- Log the request.

Now imagine 1,000 users arrive.

A single-threaded server that handles each request fully before starting the next may struggle badly if each request waits on database or network I/O.

A multi-threaded server might assign different requests to different threads. While one waits for the database, another can parse a request, another can send a response, and another can log.

That can improve throughput and responsiveness.

But now shared resources matter.

The database connection pool has a limit.

The log file may need synchronization.

Shared caches need safe access.

Session state must not be corrupted.

Rate limits still apply.

If too many threads are created, memory usage grows, context switching increases, and the server may spend more time managing work than doing work.

This is why modern servers often use combinations of strategies:

- processes for isolation

- threads for parallel work

- event loops for high-scale I/O

- async programming for managing waiting efficiently

- worker pools for controlled concurrency

- queues for smoothing bursts of work

- locks or atomics for shared state

- databases designed to handle concurrent access safely
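One of the limits mentioned above, the database connection pool, can be sketched with a semaphore. This is a toy model, not a real pool: MAX_CONNECTIONS, handle_request, and the sleep that stands in for a query are all invented for illustration.

```python
import threading
import time

MAX_CONNECTIONS = 3  # hypothetical pool size
pool_slots = threading.BoundedSemaphore(MAX_CONNECTIONS)

stats_lock = threading.Lock()
in_use = 0
peak_in_use = 0

def handle_request(request_id):
    global in_use, peak_in_use
    with pool_slots:              # blocks while all slots are taken
        with stats_lock:
            in_use += 1
            peak_in_use = max(peak_in_use, in_use)
        time.sleep(0.02)          # stand-in for the database query
        with stats_lock:
            in_use -= 1

threads = [threading.Thread(target=handle_request, args=(i,)) for i in range(10)]
for t in threads:
    t.start()
for t in threads:
    t.join()

print("peak connections in use:", peak_in_use)
```

Ten requests arrive, but no more than three ever hold a "connection" at once. The rest wait their turn instead of overwhelming the database.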

The exact architecture depends on the workload.

A chat app, image processor, AI inference service, payment processor, game server, and file backup system all have different concurrency needs.

Same theme. Different dragons.

Event loops and async: concurrency without one thread per task

Threads are not the only way to handle concurrency.

Many modern systems use an event loop.

Instead of creating one thread for every task, an event loop keeps track of operations that are waiting and resumes work when events occur.

This is common in JavaScript runtimes, async Python frameworks, high-performance networking systems, GUI applications, and many server environments.

The idea is:

Start an operation.

If it must wait, do not block the whole program.

Register interest in the result.

Move on to other work.

When the result is ready, resume the appropriate logic.

This is great for I/O-heavy workloads.

But it does not magically make CPU-heavy work disappear. If you do a long CPU-bound computation on a single event loop thread, you can block everything else. That is how a nice responsive app becomes a frozen rectangle of regret.

Async programming solves one class of waiting problems.

It does not repeal physics.
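Here is a minimal sketch of the event-loop idea using Python's asyncio. The fetch coroutine and its three labels are made up, and asyncio.sleep stands in for a real network call. All three waits overlap on a single thread.

```python
import asyncio
import time

async def fetch(name, delay):
    # asyncio.sleep stands in for a network call. While this task waits,
    # the event loop is free to run other tasks.
    await asyncio.sleep(delay)
    return f"{name} ready"

async def main():
    start = time.perf_counter()
    # gather starts all three and returns results in argument order.
    results = await asyncio.gather(
        fetch("profile", 0.2),
        fetch("orders", 0.2),
        fetch("recommendations", 0.2),
    )
    return results, time.perf_counter() - start

results, elapsed = asyncio.run(main())
print(results, f"{elapsed:.2f}s")
```

If you swapped asyncio.sleep for a long calculation, or for the blocking time.sleep, the three tasks would run back to back and the loop could do nothing else in the meantime.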

Shared memory vs message passing

One major design choice in concurrent systems is how workers communicate.

With shared memory, multiple threads access common data structures. This can be fast, but it requires careful synchronization.

With message passing, workers communicate by sending messages. This can reduce shared-state bugs because ownership is clearer: one worker sends data, another receives it.

Message passing appears in many places:

- actor systems

- queues

- microservices

- distributed systems

- browser workers

- operating system services

- cloud event pipelines

- AI workflow orchestration

Message passing can make systems easier to reason about, but it has costs too. Messages must be serialized, transmitted, queued, copied, retried, or ordered. Failures become more distributed. Debugging moves from “who changed this variable?” to “where did this message go, and why is it wearing a fake mustache?”

Again: tradeoffs.

Shared memory is fast and dangerous.

Message passing is cleaner and sometimes heavier.

The right answer depends on the system.
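A small sketch of message passing between two threads, using queue.Queue from the standard library. The names inbox and STOP are assumptions for this example. The producer never touches the consumer's data directly; it only hands values over through the queue.

```python
import queue
import threading

inbox = queue.Queue()
STOP = object()  # sentinel message meaning "no more work"

def producer():
    for n in range(5):
        inbox.put(n)          # hand the value over through the queue
    inbox.put(STOP)

def consumer(out):
    while True:
        message = inbox.get()  # blocks until a message arrives
        if message is STOP:
            break
        out.append(message * message)

squares = []  # written only by the consumer thread
producer_thread = threading.Thread(target=producer)
consumer_thread = threading.Thread(target=consumer, args=(squares,))
producer_thread.start()
consumer_thread.start()
producer_thread.join()
consumer_thread.join()

print(squares)  # [0, 1, 4, 9, 16]
```

There is still a lock inside the queue, but it lives in one well-tested place instead of being scattered through your code.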

Concurrency and AI-era software

Concurrency has become even more important in modern AI and cloud workflows.

Consider an AI-powered application.

A single user request might involve:

- receiving a web request

- checking user permissions

- fetching context from a database

- calling a model API

- streaming tokens back to the browser

- logging usage

- updating billing

- writing conversation state

- retrieving files

- running moderation or safety checks

- calling tools

- handling retries if a remote service fails

Much of that work involves waiting.

Waiting for network calls.

Waiting for storage.

Waiting for a model response.

Waiting for another service to answer.

Without concurrency, one user’s slow request could tie up resources badly. With careful concurrency, the system can continue serving other users while slow operations are pending.

But AI workflows also bring new hazards.

If multiple tool calls update the same document, calendar, database record, or shopping cart, order matters.

If a background job retries after a timeout, it might perform the same operation twice unless the system is designed to be idempotent.

If many requests hit the same vector database, cache, or model endpoint, bottlenecks appear.

If streaming output is interrupted, the system must decide what state was actually committed.
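To make idempotent a little more concrete, here is a toy sketch of the retry hazard. BillingLedger and the idempotency keys are hypothetical; a real system would store them durably and make the check-and-record step atomic.

```python
class BillingLedger:
    """Toy in-memory ledger; a real system would persist this durably."""

    def __init__(self):
        self.charges = {}  # idempotency_key -> amount

    def charge(self, idempotency_key, amount):
        # If this key was already processed, return the earlier result
        # instead of charging again.
        if idempotency_key in self.charges:
            return self.charges[idempotency_key]
        self.charges[idempotency_key] = amount
        return amount

    def total(self):
        return sum(self.charges.values())

ledger = BillingLedger()
ledger.charge("request-123", 25)
ledger.charge("request-123", 25)  # retry after a timeout: no double charge
ledger.charge("request-456", 10)
print(ledger.total())  # 35
```

The retry is harmless because the operation is keyed by the request, not by how many times someone asked.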

Concurrency is not some dusty operating systems topic from the age of beige computers and keyboards loud enough to scare pets.

It is inside modern software everywhere.

Especially the parts with cloud services, queues, APIs, agents, tools, databases, and users clicking things twice because the spinner looked suspicious.

Misconception: “The lines run in the order I wrote them”

In a single thread, most of the time, that mental model is useful.

In concurrent programs, it becomes dangerous.

Yes, each thread has an order.

But the whole program may involve many interleaved orders.

Thread A may execute line 1.

Thread B may execute line 1.

Thread A may execute line 2.

Thread C may wake up.

Thread B may modify shared state.

Thread A may continue using a value that is now stale.

The code order you see in one file is not necessarily the full execution order of the system.

This is a major conceptual jump.

A concurrent program is not just a list of instructions.

It is a set of possible interleavings.

Correct concurrent code must work across the interleavings that can actually happen, not just the one you imagined while sipping coffee and feeling briefly optimistic.
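For a program small enough, you can list every interleaving. This sketch does that for the earlier shared counter: two simulated threads, A and B, each doing read, add, store on a counter that starts at 10. The helper names are invented, and it is a simulation rather than real threading.

```python
from itertools import combinations

STEPS = ("read", "add", "store")

def run(schedule):
    """Run one interleaving. schedule is a sequence of thread names;
    each entry runs that thread's next step."""
    counter = 10
    local = {"A": None, "B": None}   # each thread's private value
    position = {"A": 0, "B": 0}      # index of each thread's next step
    for thread in schedule:
        step = STEPS[position[thread]]
        if step == "read":
            local[thread] = counter
        elif step == "add":
            local[thread] += 1
        else:  # store
            counter = local[thread]
        position[thread] += 1
    return counter

def all_schedules():
    # Choose which 3 of the 6 time slots belong to thread A.
    for a_slots in combinations(range(6), 3):
        yield tuple("A" if i in a_slots else "B" for i in range(6))

outcomes = {}
for schedule in all_schedules():
    outcomes.setdefault(run(schedule), []).append(schedule)

print({value: len(schedules) for value, schedules in outcomes.items()})
# {12: 2, 11: 18}
```

Of the 20 possible orderings, only the two where one thread finishes completely before the other starts give 12. The other 18 lose an update.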

Misconception: “Locks make everything safe”

Locks can make shared access safe.

They can also make programs slow, stuck, fragile, or impossible to understand after the fourth nested synchronization block.

A lock protects only what all relevant code agrees it protects.

If one part of the code uses the lock and another part casually ignores it, the lock is not a guardian. It is a decorative traffic cone.

Locks also need careful scope.

Too little locking: race conditions.

Too much locking: poor performance or deadlock.

Wrong lock order: deadlock.

Holding locks during slow I/O: everyone waits.

Locking around code that calls unknown callbacks: surprise chaos.

Good concurrent design often means reducing the amount of shared mutable state in the first place.

The best race condition is the one you designed out of existence before it learned your address.

Misconception: “If it works on my machine, it is thread-safe”

Nope.

Thread bugs are often timing-sensitive.

Different CPUs, operating systems, compiler optimizations, workloads, core counts, background processes, and runtime environments can reveal bugs you never saw locally.

A race condition may disappear when you add logging because logging changes timing.

This is particularly rude.

You add print statements to find the bug, and the bug puts on a little hat and leaves.

Then you remove the print statements, and it returns with luggage.

This is why concurrency bugs require disciplined thinking, not just vibes and console output.

Testing helps, but it is not enough by itself. Design matters. Code review matters. Static analysis can help. Stress tests can help. Deterministic concurrency testing can help. Clear ownership rules help. Avoiding unnecessary shared state helps a lot.

A small design lesson: ownership matters

One of the most useful questions in concurrent software is:

Who owns this data?

If everyone owns it, nobody owns it.

And then the bug owns it.

Clear ownership makes systems easier to reason about.

Maybe one thread owns a queue.

Maybe one worker owns a database transaction.

Maybe each request owns its local state.

Maybe shared state is immutable, so nobody can corrupt it.

Maybe updates flow through a single coordinator.

Maybe messages are copied instead of shared.

The specific pattern varies, but the principle is stable:

The more precisely you define who can read, write, and coordinate data, the fewer mysterious timing goblins you invite into the walls.
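As one example of the "shared state is immutable" option, here is a sketch using a frozen dataclass. The Config class and its fields are made up for illustration. Any number of threads could read an object like this, because none of them can change it.

```python
from dataclasses import dataclass, replace, FrozenInstanceError

@dataclass(frozen=True)
class Config:
    max_retries: int
    timeout_seconds: float

current = Config(max_retries=3, timeout_seconds=1.5)

try:
    current.max_retries = 5   # attempting to mutate raises an error
except FrozenInstanceError:
    mutation_blocked = True
else:
    mutation_blocked = False

# An "update" builds a new object; readers holding the old one are unaffected.
updated = replace(current, max_retries=5)
print(current, updated, mutation_blocked)
```

Whoever needs a changed value gets a new object, so the question of who owns the old one stops mattering.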

How this connects to operating systems

Next episode, we will step more directly into operating systems.

Processes, threads, and scheduling are a perfect bridge.

The operating system is not just “that thing behind the desktop.”

It is the manager of execution.

It decides:

- what runs

- when it runs

- what memory it can access

- what files it can open

- what devices it can use

- what happens when it waits

- what happens when it crashes

- what happens when it asks for more resources

- what happens when another process is also making demands

In other words, the operating system is the adult in the room.

Not always a calm adult.

Sometimes an adult with 900 unread emails and a printer jam.

But still the adult.

Processes and threads are how programs become scheduled work inside that larger system.

The historical note, without turning this into a plaque

Early computers often ran one program at a time.

That made life simpler, in the same way living alone in a cave makes roommate conflict simpler.

As machines became more expensive, more powerful, and more central to institutions, it became important to share them efficiently. Time-sharing systems allowed multiple users and jobs to interact with one machine. Operating systems evolved to manage processes, scheduling, memory protection, and I/O coordination.

Later, personal computers brought interactive multitasking to everyday users.

Then multi-core processors made parallel execution ordinary.

Then cloud systems, web servers, distributed databases, mobile apps, and AI services made concurrency impossible to avoid unless your software lives in a sealed jar labeled “single-user demo, please do not touch.”

The history matters because concurrency is not a random complexity added to make students sad.

It is a response to a real need:

Computers must manage many tasks, many users, many devices, many delays, and many sources of work.

The trick is making that possible without letting everything collide.

Practical advice for beginners

When learning concurrency, start small.

Do not begin by building a distributed real-time trading platform powered by 80 threads and confidence.

Start with one shared counter.

Then a producer-consumer queue.

Then a thread pool.

Then a simple server.

Then async I/O.

Then locks, deadlocks, and race conditions.

Learn to ask:

What work can happen independently?

What data is shared?

Who owns each piece of data?

What operations must be atomic?

What happens if this task pauses here?

What happens if two requests arrive at once?

What happens if this operation retries?

What happens if it fails halfway?

What happens if it succeeds but the response is lost?

Those questions are not pessimism.

They are engineering.

A little suspicion is healthy. Software rewards the kind of optimism that writes code and the kind of pessimism that tests the doors.

The big idea

Processes are running programs with protected resources.

Threads are flows of execution inside a process.

Concurrency lets multiple tasks make progress during the same period.

Parallelism lets multiple tasks execute at the same instant.

Scheduling decides who runs when.

Shared state creates coordination problems.

Race conditions happen when timing affects correctness.

Locks and synchronization help, but they introduce tradeoffs.

Good concurrent design is not about adding more threads until the graph goes up. It is about understanding work, waiting, ownership, state, and coordination.

That is the real lesson.

A computer is not merely fast because it does one thing quickly.

It is useful because it manages many things at once without collapsing into a tiny opera of broken assumptions.

Mostly.

Your job as a programmer is to understand where the illusion comes from, where it can break, and how to design software that survives the fact that modern programs do not politely take turns.

If this helped the machine feel a little less mysterious and a little more like a very organized traffic problem with electricity, follow along for the next episode. And if concurrency has ever hurt your feelings personally, comment with the bug that made you question your career, your clock speed, or the moral character of shared memory.

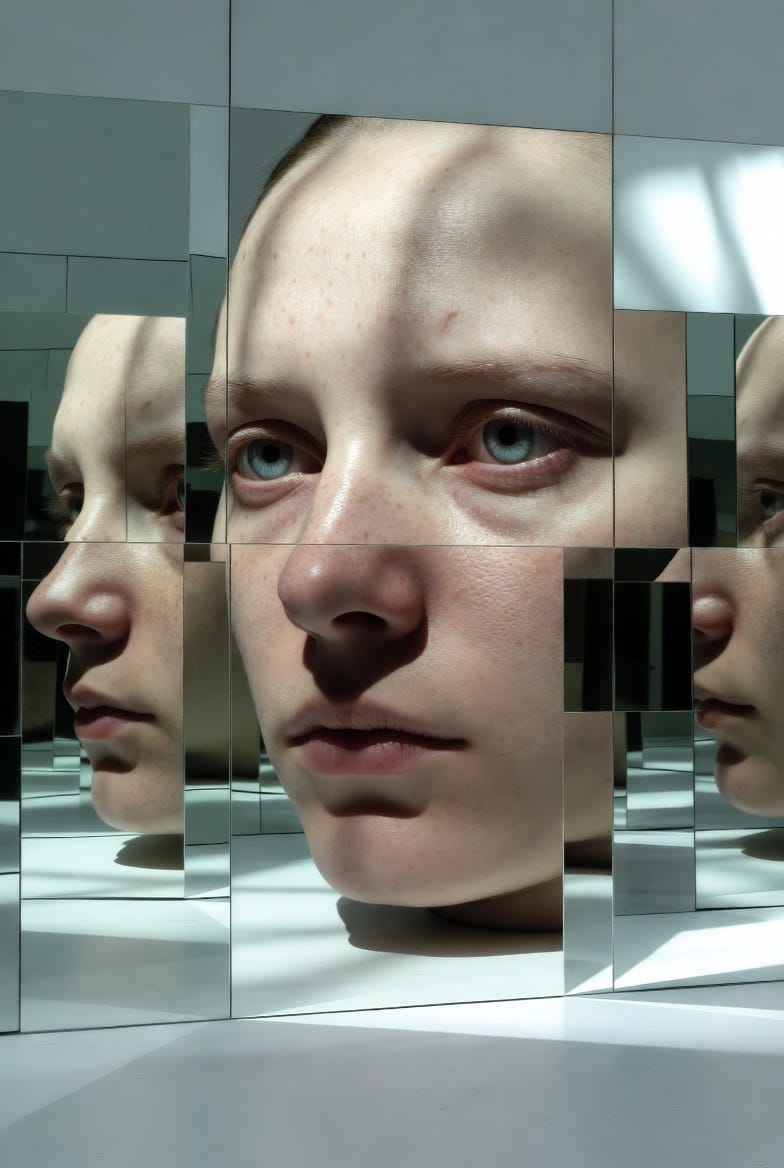

Song Recommendations:

Safe and Sound — Capital Cities

Sweet Nothing — Calvin Harris feat. Florence Welch